Cross-Cutting Foundation: Context Optimization

(Context is the substrate of autonomy: engineer it deliberately, budget it explicitly, and govern it as a risk surface)

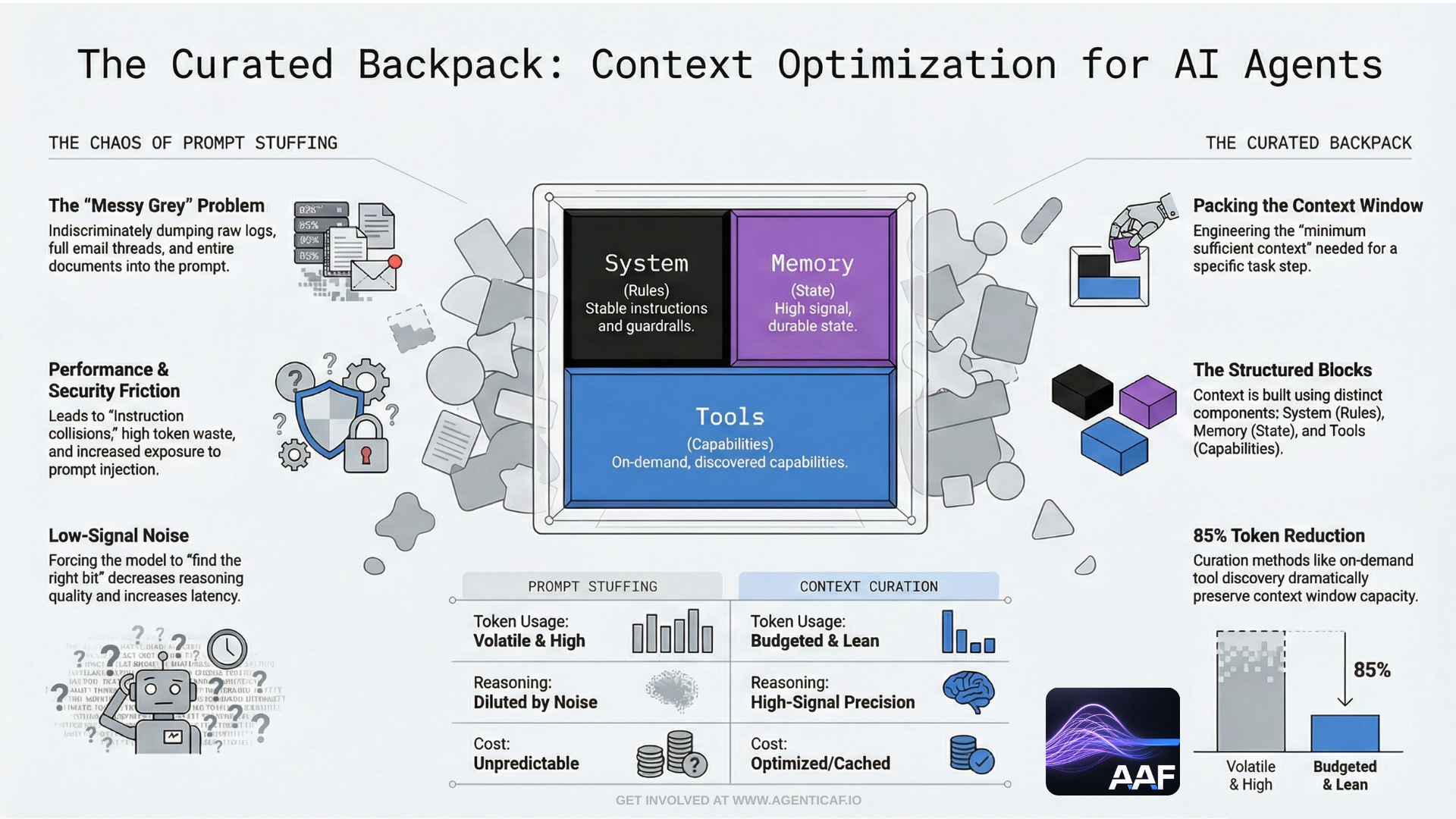

Context optimization is not a prompt-writing tactic. In agentic systems it is a foundational architectural discipline, because context is the working memory of the control loop. It determines what the agent “knows,” what constraints it can apply, which tools it can select, how it interprets instructions, and whether it can verify outcomes. It also determines cost, latency, and security exposure.

This is why context optimization cannot sit inside a single pillar. It cross-cuts:

- Security (what information is exposed; susceptibility to instruction collisions and prompt injection)

- Reliability (whether the agent has sufficient constraints and facts to succeed repeatedly)

- Cost Optimization (tokens and loop depth)

- Performance Efficiency (latency, throughput, coordination overhead)

- Operational Excellence (reproducibility, auditability, drift control)

The architectural objective is:

Provide the minimum sufficient context for the task, in a controlled and explainable form, while preserving verification signals and preventing unsafe information flow.

Anthropic’s context engineering guidance makes the underlying point practical: agent performance and stability is often less about the model and more about the quality, structure, and discipline of the context being supplied to the agent loop. (anthropic.com)

11.1 Why Context Is Different in Agentic Systems

Context optimization in agentic systems is materially harder than in single-turn LLM applications because agents:

-

operate over multiple steps, accumulating state and tool outputs,

-

invoke tools and re-ingest tool results back into context,

-

maintain memory and policies across time,

-

and may coordinate with other agents and systems.

Tool-enabled agents also consume context through tool definitions and tool results. Anthropic’s MCP engineering write-up explicitly describes that MCP clients load tool definitions into the model’s context window and that each tool call and tool result is mediated through the model during the loop. That means every additional tool, and every additional result, competes for the same bounded context budget. (anthropic.com)

This creates the core “context tension”:

-

Too little context → poor decisions, unreliable outcomes, unsafe tool use.

-

Too much context → high cost, slower performance, increased leakage risk, instruction collisions, and degraded reasoning due to noise.

11.2 Context vs Memory: A Hard Separation (Non-Negotiable)

A common architectural failure is treating “context” and “memory” as the same thing. They are not.

Context should be:

-

task-scoped,

-

ephemeral,

-

minimal,

-

disposable,

-

and curated for the specific step in the loop.

Memory should be:

-

durable,

-

high-signal,

-

policy-controlled,

-

audited,

-

and versioned or reversible.

If you do not separate them, three predictable failure modes emerge:

-

Context bloat:

Everything is shoved into the prompt “just in case,” driving cost and latency upward and making reasoning worse.

-

Memory pollution:

Low-quality or unvalidated information becomes persistent and begins to steer future tasks.

-

Security leakage:

Sensitive information that should never persist becomes part of the agent’s durable state and can be surfaced later.

NIST’s AI Risk Management framing reinforces the need for systematic validation and controls around information used by AI systems; in practice, this pushes strongly toward explicit separation and governance of what becomes durable knowledge versus transient task inputs. (nvlpubs.nist.gov)

11.3 Context Budgeting: The Engineering Discipline That Enables Autonomy

Autonomy depends on context. But context is finite. A well-architected agentic system therefore treats context like a budgeted resource—just like time, spend, or tool calls.

A practical approach is to define a context budget per task and per step, with explicit allocations such as:

-

System and policy envelope (stable instructions, guardrails, role constraints)

-

Current task state (goal, constraints, risk class, autonomy level, budgets)

-

Working set (only what is necessary to complete the current step)

-

Retrieved facts (grounded, attributed, minimal)

-

Tool definitions (as needed)

-

Tool results (filtered and normalized)

This has two important effects:

-

It prevents accidental context explosion.

-

It forces architecture decisions about retrieval, summarization, and tool discovery.

This is not theoretical. Anthropic’s Advanced Tool Use work introduces “Tool Search” precisely because loading large tool catalogs into context creates unnecessary token overhead and reduces effective capacity. They quantify the benefit: using tool search rather than loading the entire tool set can significantly reduce token usage (reported as an 85% reduction in token overhead in their example), while preserving access to the tools when needed. (anthropic.com)

The architectural rule is:

Default to minimal tool exposure and on-demand discovery.

Only introduce tools into context when the agent is likely to use them.

11.4 Retrieval vs Prompt Stuffing: Grounding Without Noise

Agentic systems often fail because they confuse “more context” with “better context.”

The right pattern is retrieval and grounding:

-

retrieve relevant documents or facts when needed,

-

attribute sources,

-

and include only the minimal excerpts required for the current step.

The wrong pattern is prompt stuffing:

-

dumping entire documents,

-

including full email threads,

-

including tool logs,

-

and hoping the model “finds the right bit.

From a systems perspective, prompt stuffing is a reliability and cost anti-pattern. It increases:

-

token usage,

-

latency,

-

instruction collision probability,

-

and the chance that irrelevant content steers the agent.

This is also a security concern: prompt injection often arrives embedded in retrieved content. If you indiscriminately inject large external content into context, you are increasing the probability that hostile instructions appear alongside genuine constraints.

11.5 Context Provenance: Knowing Where “Knowledge” Came From

In agentic systems, context sources are heterogeneous:

-

user input,

-

retrieval results,

-

tool outputs,

-

other agents,

-

memory,

-

system policies.

Operational excellence requires that this be explicitly tagged and traceable.

At minimum, an agent should know:

-

which parts of context are trusted policies,

-

which parts are external untrusted data,

-

which parts are derived from tools,

-

and which parts are memory.

This supports:

-

debugging (“why did it think that?”),

-

security controls (treat untrusted content differently),

-

and governance (audit what influenced a decision).

This is tightly related to the epistemic gate model: provenance helps determine whether an output is a hypothesis requiring validation or a verified fact eligible for authority.

11.6 Tool Result Normalization: Keep Deterministic Compute Out of the Model Context

Tool outputs can be extremely data-heavy (JSON blobs, logs, tables). Passing raw outputs back into the model context is often wasteful and destabilizing.

A well-architected agentic system uses deterministic compute to:

-

filter,

-

aggregate,

-

normalize,

-

redact,

-

and summarize tool outputs before reintroducing them into context.

Anthropic’s programmatic tool calling pattern (executing code to orchestrate tools and process outputs before sending them back to the model) is an explicit articulation of this approach: reduce token consumption and improve efficiency by processing data outside the model context. (platform.claude.com)

The design principle is:

The model should see conclusions and decision-relevant signals, not raw exhaust.

This improves cost, latency, reliability, and security simultaneously.

11.7 Context Optimization and Epistemic Gates: Prevent Probability Becoming Authority

Context determines what the agent believes to be true. If context is polluted, incomplete, or untrusted, the agent’s outputs will often sound authoritative while being wrong. The epistemic-gate architecture introduced earlier requires that we treat generated content as hypotheses until validated.

Context optimization supports epistemic gates by ensuring that:

-

validation signals are available (retrieved truth, deterministic checks),

-

untrusted content is separated from policy,

-

and the agent has the minimum information necessary to verify “done.”

NIST’s emphasis on test, evaluation, verification, and validation (TEVV) as part of trustworthy AI system practice reinforces the need for systematic validation pathways rather than relying on the model’s internal confidence. (nvlpubs.nist.gov)

11.8 A Practical Standard for Context Optimization

A useful operational standard for this foundation is:

For any agent task, we can explain what context was provided, why it was provided, how it was bounded, and which parts were trusted vs untrusted—and we can reproduce the decision context for debugging and evaluation.

If you cannot do that, you do not have context governance. You have prompt accumulation.

11.11 Design Recommendations & Trade-offs

Key recommendations

- Architect context management with explicit budgeting, just-in-time retrieval, summarization, and provenance tracking.

- Use performance-oriented context patterns like on-demand tool discovery (Tool Search) to reduce the impact of large tool catalogs on the context window.

- Provide the minimum sufficient context for the task, in a controlled and explainable form, while preserving verification signals and preventing unsafe information flow.

Cross-pillar trade-offs

- Context x Cost: Providing excessive context to an agent increases token volume, which directly increases operational costs. Architect context management with explicit budgeting, just-in-time retrieval, summarization, and provenance tracking. (source: 4.2.A)

- Context x Performance: Providing excessive context, such as large tool catalogs, increases model processing time and degrades agent responsiveness (latency). Use performance-oriented context patterns like on-demand tool discovery (Tool Search) to reduce the impact of large tool catalogs on the context window. (source: 4.2.A)

- Context x Security: Providing insufficient context can lead to unsafe tool use, while providing excessive context increases the risk of data leakage and instruction injection. Provide the minimum sufficient context for the task, in a controlled and explainable form, while preserving verification signals and preventing unsafe information flow. (source: 11.1)

By autonomy level

- Assistive:

- Context x Cost: Large context volumes increase token costs per interaction even when the human only needs a summary.

- Context x Performance: Bloated context increases suggestion latency, degrading the interactive experience.

- Context x Security: Oversized context may surface sensitive data in suggestions or omit security constraints, leading to unsafe human decisions.

- Delegated:

- Context x Cost: Plans requiring large context payloads force the approver to accept high-cost actions without alternatives.

- Context x Performance: Oversized context payloads slow execution even after approval, increasing end-to-end latency.

- Context x Security: Malicious instructions hidden in context can shape proposed plans; sensitive data may leak in plan details.

- Bounded Autonomous:

- Context x Cost: Unbounded context accumulation can exhaust token or cost budgets before the task completes.

- Context x Performance: Each reasoning step is slowed by a large context window, reducing overall loop throughput.

- Context x Security: Excess untrusted context increases prompt injection risk; insufficient context leads to unsafe tool arguments.

- Supervisory:

- Context x Cost: Passing un-summarized context between worker agents multiplies token costs at every coordination step.

- Context x Performance: Large context exchanges between agents create system-wide latency bottlenecks.

- Context x Security: Context-passing between agents can become a data exfiltration channel or a vector for cascading injection attacks.

Section 11 Citations (Sources & Links)

-

Anthropic — Effective context engineering for AI agents (context structure as a primary driver of agent performance and stability).

https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents

(anthropic.com) -

Anthropic — Code execution with MCP: building more efficient AI agents (tool definitions and tool call/results mediated through the model; context window pressure).

https://www.anthropic.com/engineering/code-execution-with-mcp

(anthropic.com) -

Anthropic — Introducing advanced tool use (Tool Search; quantified reduction in token overhead; on-demand tool discovery).

https://www.anthropic.com/engineering/advanced-tool-use

(anthropic.com) -

Anthropic Docs — Programmatic tool calling (process/filter tool outputs outside context to reduce tokens and latency).

https://platform.claude.com/docs/en/agents-and-tools/tool-use/programmatic-tool-calling

(platform.claude.com) -

NIST — AI Risk Management Framework (AI RMF 1.0) (trustworthy AI requires systematic risk management and validation; TEVV framing).

https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

(nvlpubs.nist.gov)